Just Another Noise 2 Noise Implementation (JANNI)

JANNI implements the neural-network denoising approach described in NVIDIA's Noise2Noise paper: Noise2Noise: Learning Image Restoration without Clean Data - arXiv

Besides a simple GUI and a command-line interface, JANNI also provides a simple Python interface for integration into other programs.

Download and Installation

You can find the download and installation instructions here: Download and Installation

Start JANNI

If you followed the installation instructions, you now have to activate the JANNI virtual environment with

source activate janni

You can use JANNI either from the command line or with the GUI. Most users prefer the GUI, but this tutorial also gives the equivalent command-line commands. You can start the GUI with:

janni_denoise.py

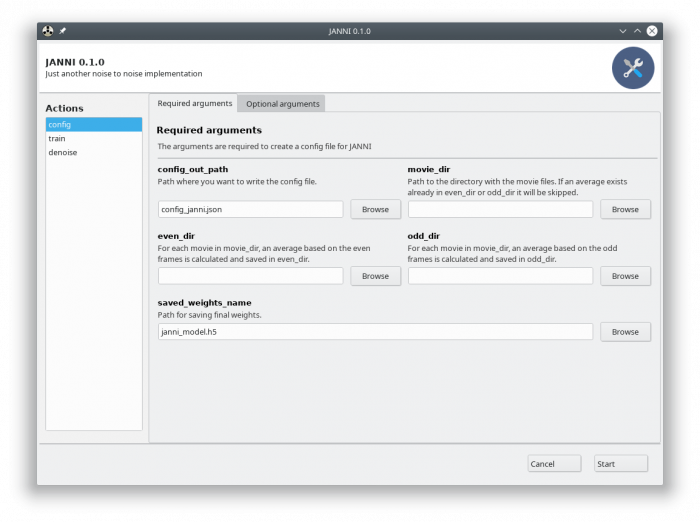

The GUI is essentially a visualization of the command line interface:

On the left side, you can see all available actions:

- config: To create a config file, which is needed to train JANNI.

- train: This action lets you train a model for your data.

- denoise: Run this action to apply a model on new images.

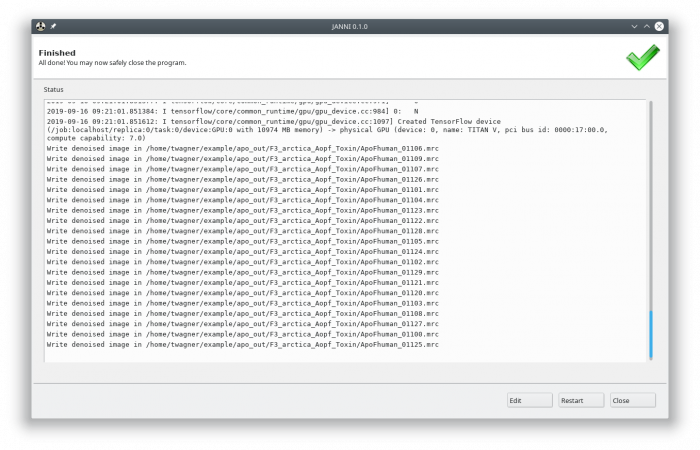

Each action has several parameters, which are organized in tabs. Once you have chosen your settings, press “Start”; the command is then executed and JANNI shows you the output:

It will tell you if something goes wrong. Pressing “Edit” brings you back to your settings, where you can either edit the settings (in case something went wrong) or go to the next action.

Training a model for your data

If you want to use the general model (Download here), you can skip this part and denoise your images directly.

If you would like to train a model for your data, copy a few movie files into a separate directory. We typically use at least 30 (unaligned) movies to train the model. Fewer might also work, but more movies often work much better. It is recommended to copy movies from different data collections into different folders. If you later want to denoise binned micrographs, you should set up binning correctly.

To do so, create a file named bin.txt in the training folder that contains the movies that need to be binned. Write the binning factor into this file (typically 2 or 4).
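Preparing the training folder can be scripted. The sketch below assumes the raw movies are `.tif` files in one source directory. The function name `prepare_training_dir` and all paths are hypothetical and not part of JANNI. Only the convention of putting the binning factor into `bin.txt` comes from the instructions above.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not part of JANNI): copy up to N movies into a separate
# training folder and, optionally, write the binning factor to bin.txt.
# Usage: prepare_training_dir SRC_DIR DEST_DIR [N_MOVIES] [BIN_FACTOR]
# Prints the number of movies copied.
prepare_training_dir() {
    local src="$1" dest="$2" n="${3:-30}" bin="${4:-}"
    mkdir -p "$dest"
    local count=0 movie
    # Assumption: movies are .tif files; change the pattern (e.g. *.mrc) for your data.
    for movie in "$src"/*.tif; do
        [ -e "$movie" ] || continue   # glob matched nothing
        cp "$movie" "$dest"/
        count=$((count + 1))
        [ "$count" -ge "$n" ] && break
    done
    # Only write bin.txt if the micrographs you denoise later will be binned.
    if [ -n "$bin" ]; then
        echo "$bin" > "$dest/bin.txt"
    fi
    echo "$count"
}
```

For example, `prepare_training_dir /data/session1/movies ~/example/movies 30 2` copies up to 30 movies and writes `2` into `~/example/movies/bin.txt`. Call it once per data collection, with a different destination folder each time.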

The next thing you have to do is to create a configuration file for JANNI.

Configuration

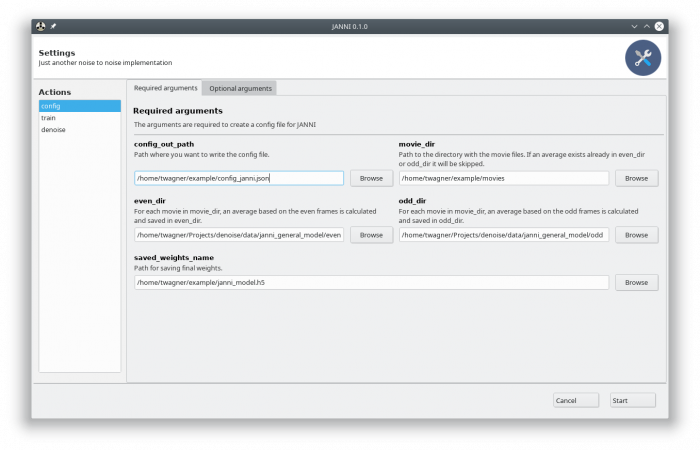

In the GUI choose the action “config” and fill out the required arguments:

Press “Start”, and the config file will be written to the path given in config_out_path. You should see the following output:

Generate the configuration file with the command line

If you would like to use the command line, you can get a description of all parameters with:

janni_denoise.py config -h

The following command will create the same config file as with the GUI:

janni_denoise.py config ~/example/config_janni.json --movie_dir ~/example/movies/ --even_dir ~/example/even/ --odd_dir ~/example/odd/

Training

In principle, you only have to specify the config file. However, you might also want to specify the GPU ID. You can find the GPU option in the Optional arguments tab.

Press “Start” to run the training and wait for JANNI to finish. Then press “Edit” (where the “Start” button used to be) to prepare for the next step.

Run the training with the command line

To run the training on GPU 0:

janni_denoise.py train config.json -g 0
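The configuration and training steps can be chained in a small script. This sketch uses only the arguments shown in this tutorial and mirrors the `~/example/` layout of the config example. The function name `train_janni` and the `JANNI` override variable are hypothetical. Setting `JANNI=echo` prints the commands without running them.

```bash
#!/usr/bin/env bash
# Sketch: write the JANNI config and train a model in one step.
# Only arguments shown in this tutorial are used; see "janni_denoise.py config -h"
# for all options. JANNI can be overridden (e.g. JANNI=echo for a dry run).
JANNI="${JANNI:-janni_denoise.py}"

# Usage: train_janni WORKDIR [GPU_ID]
# Expects the training movies in WORKDIR/movies/.
train_janni() {
    local workdir="$1" gpu="${2:-0}"
    local config="$workdir/config_janni.json"
    # Create the config file (same arguments as the config example).
    "$JANNI" config "$config" \
        --movie_dir "$workdir/movies/" \
        --even_dir "$workdir/even/" \
        --odd_dir "$workdir/odd/" || return 1
    # Train on the requested GPU.
    "$JANNI" train "$config" -g "$gpu"
}
```

For example, `train_janni ~/example 0` runs both steps on GPU 0. Training is skipped if the config step fails.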

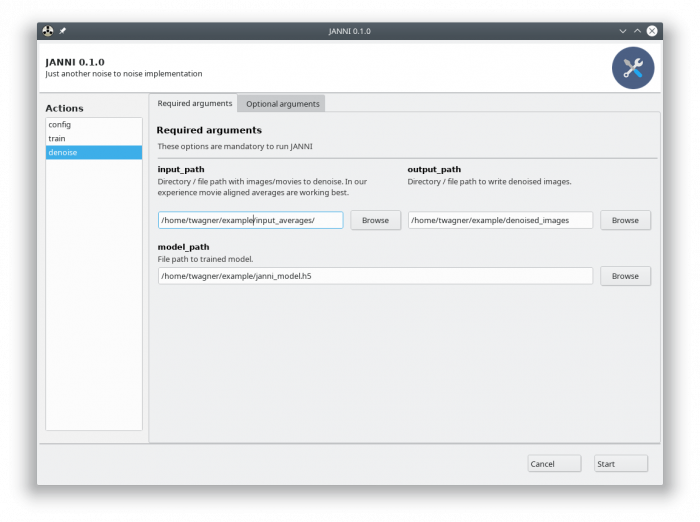

Denoise

With a trained model (either a model you trained yourself or the general model (Download here)), you can directly denoise either your movies or your averages. In our experience, denoising the motion-corrected averages works better. In the GUI, select the action denoise and fill in the required parameters:

You might also want to change the GPU ID in the Optional arguments tab. Then press the “Start” button. JANNI will denoise your images at roughly 1 s per micrograph.
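Using the rate quoted above, you can estimate the total runtime from the number of images. This is a minimal sketch that assumes the averages are `.mrc` files in a single directory. The function name is hypothetical, and actual speed depends on your GPU and image size.

```bash
#!/usr/bin/env bash
# Hypothetical helper: estimate denoising time (default ~1 s per micrograph).
# Usage: estimate_denoise_time DIR [SECONDS_PER_IMAGE]
estimate_denoise_time() {
    local dir="$1" per_image="${2:-1}"
    local n=0 f
    # Assumption: micrographs are .mrc files; change the pattern if needed.
    for f in "$dir"/*.mrc; do
        [ -e "$f" ] && n=$((n + 1))
    done
    local total=$((n * per_image))
    # Minutes are rounded up.
    printf '%d micrographs, ~%d s (~%d min)\n' "$n" "$total" $(( (total + 59) / 60 ))
}
```

For example, a folder with 125 averages gives `125 micrographs, ~125 s (~3 min)`.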

Run prediction in the command line

In case you need a description of all available parameters, type:

janni_denoise.py denoise -h

The following command denoises the images in /my/averages/ and saves the denoised images in /my/outputdir/denoised/. The denoising here runs on GPU 0:

janni_denoise.py denoise /my/averages/ /my/outputdir/denoised/ janni_imodel.h5 -g 0
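If your averages are split across several folders (for example, one per data collection), the denoise command can be run in a loop. This sketch reuses the positional arguments of the command above. The function name `denoise_all`, the output layout, the model filename in the example and the `JANNI` override variable are assumptions for illustration.

```bash
#!/usr/bin/env bash
# Sketch: denoise several folders of averages with the same model.
# Each input folder DIR is written to OUT_ROOT/<basename of DIR>/denoised/.
# JANNI can be overridden (e.g. JANNI=echo for a dry run).
JANNI="${JANNI:-janni_denoise.py}"

# Usage: denoise_all MODEL GPU_ID OUT_ROOT DIR...
denoise_all() {
    local model="$1" gpu="$2" out_root="$3"
    shift 3
    local dir name
    for dir in "$@"; do
        name="$(basename "$dir")"
        # Same argument order as above: input dir, output dir, model, GPU.
        "$JANNI" denoise "$dir/" "$out_root/$name/denoised/" "$model" -g "$gpu" || return 1
    done
}
```

For example, `denoise_all my_model.h5 0 /my/outputdir /data/session1 /data/session2` writes to `/my/outputdir/session1/denoised/` and `/my/outputdir/session2/denoised/`. The loop stops at the first failing folder, so errors are not hidden.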

Developer Information

Please check out the Jupyter notebook to see how to use JANNI from Python.