The documentation has moved to https://cryolo.readthedocs.io

Picking particles - Using a model trained for your data

This tutorial explains how to train a model specific to your dataset.

If you followed the installation instructions, you now have to activate the cryolo virtual environment with

source activate cryolo

1. Data preparation

In the following I will assume that your image data is in the folder full_data.

The next step is to create training data. To do so, we have to pick single particles manually in several micrographs. Ideally, the micrographs are picked to completion. However, it is not necessary to pick all particles. crYOLO will still converge if you miss some (or even many).

How many micrographs you need to pick depends on your data. Typically 10 micrographs are a good start. However, that number may increase or decrease due to several factors:

- A very heterogeneous background could make it necessary to pick more micrographs.

- When you refine a general model, you might need to pick fewer micrographs.

- If your micrographs are only sparsely decorated, you may need to pick more micrographs.

We recommend that you start with 10 micrographs, then autopick your data, check the results and finally decide whether to add more micrographs to your training set. If you refine a general model, even 5 micrographs might be enough.

To create your training data, crYOLO is shipped with a tool called “boxmanager”. However, you can also use tools like e2boxer to create your training data.

Start the box manager with the following command:

cryolo_boxmanager.py

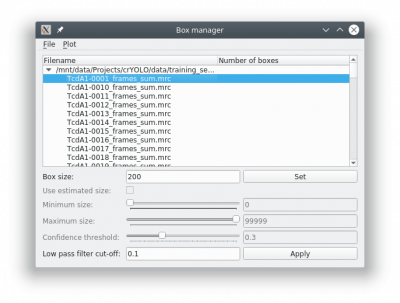

Now press File → Open image folder and then select the full_data directory. The first image should pop up. You can navigate through the images in the directory tree. Here is how to pick particles:

- LEFT MOUSE BUTTON: Place a box

- HOLD LEFT MOUSE BUTTON: Move a box

- CONTROL + LEFT MOUSE BUTTON: Remove a box

- “h” KEY: Toggle to make boxes invisible / visible

You might want to run a low pass filter before you start picking the particles. Just press the [Apply] button to get a low pass filtered version of your currently selected micrograph. The cut-off is an absolute frequency, for example 0.1. Allowed values are 0 to 0.5; lower values mean stronger filtering.

You can change the box size in the main window by changing the number in the text field labeled Box size:. Press [Set] to apply it to all picked particles. For picking, you should use the smallest square that encloses your particle.
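To get a feeling for what an absolute frequency means for your data, you can convert it into a resolution. The sketch below assumes the usual convention that absolute frequency is measured in cycles per pixel (so 0.5 corresponds to Nyquist). The function name and the example pixel size are illustrative assumptions and not part of crYOLO.

```python
def cutoff_resolution(pixel_size_angstrom: float, abs_frequency: float) -> float:
    """Resolution (in Angstrom) corresponding to an absolute frequency cut-off.

    Assumes absolute frequency is given in cycles per pixel, so that
    resolution = pixel size / absolute frequency.
    """
    if not 0 < abs_frequency <= 0.5:
        raise ValueError("absolute frequency must be in (0, 0.5]")
    return pixel_size_angstrom / abs_frequency


# Hypothetical pixel size of 1.2 Angstrom per pixel
for frequency in (0.05, 0.1, 0.3):
    resolution = cutoff_resolution(1.2, frequency)
    print(f"cut-off {frequency}: about {resolution:.1f} Angstrom")
```

Under this assumption, a lower cut-off removes everything finer than a coarser resolution, which is why lower values filter more strongly.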

Once you have finished picking from your micrographs, you can export your box files with File → Write box files.

Create a new directory called train_annotation and save them there. Close boxmanager.

Now create a third folder with the name train_image. For each box file, copy the corresponding image from full_data into train_image. crYOLO will detect image / box file pairs by taking the box file and searching for an image filename which contains the box filename.
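If you have picked many micrographs, copying the images by hand gets tedious. The following is a minimal sketch that mimics the matching rule described above (an image belongs to a box file if its filename contains the box filename without extension). The function name and the assumption that box files end in .box are mine; adapt them to your data.

```python
import shutil
from pathlib import Path


def copy_matching_images(box_dir, image_dir, out_dir):
    """Copy every image whose filename contains the stem of a box file.

    Returns the sorted names of the copied images.
    """
    box_dir, image_dir, out_dir = Path(box_dir), Path(image_dir), Path(out_dir)
    out_dir.mkdir(parents=True, exist_ok=True)
    copied = set()
    for box_file in sorted(box_dir.glob("*.box")):
        for image in sorted(image_dir.iterdir()):
            # Plain substring match, e.g. "mic_01" is contained in "mic_01.mrc"
            if image.is_file() and box_file.stem in image.name:
                shutil.copy2(image, out_dir / image.name)
                copied.add(image.name)
    return sorted(copied)


# Example call with the folder names used in this tutorial:
# copy_matching_images("train_annotation", "full_data", "train_image")
```

Note that a plain substring match also selects mic_10.mrc for a box file called mic_1.box, so zero-padded or otherwise unambiguous filenames are safer.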

2. Start crYOLO

You can use crYOLO either by command line or by using the GUI. The GUI should be easier for most users. You can start it with:

cryolo_gui.py

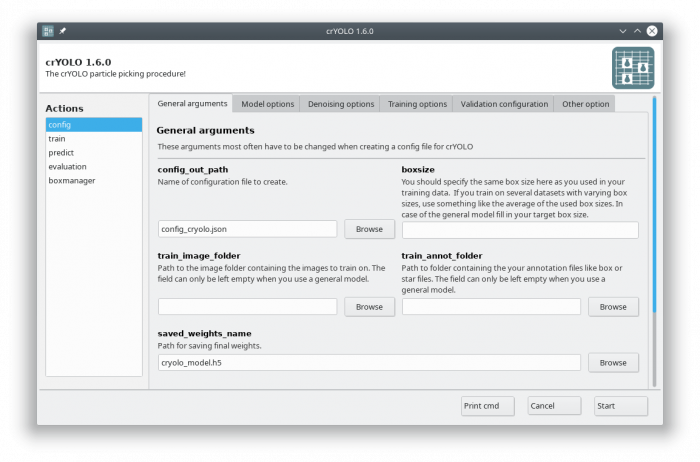

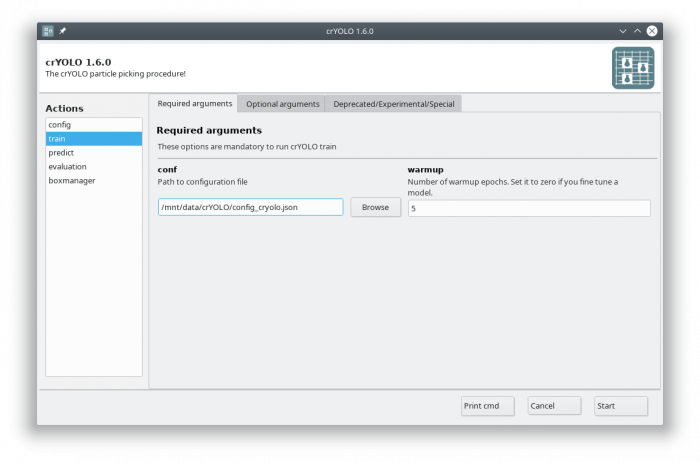

The crYOLO GUI is essentially a visualization of the command line interface. On the left side, you find all possible “Actions”:

- config: With this action you create the configuration file that you need to run crYOLO.

- train: This action lets you train crYOLO from scratch or refine an existing model.

- predict: If you have a (pre)trained model you can pick particles in your data set using this command.

- evaluation: This action helps you to quantify the quality of your model.

- boxmanager: This action starts the crYOLO boxmanager. You can use it to visualize the picked particles or to create training data.

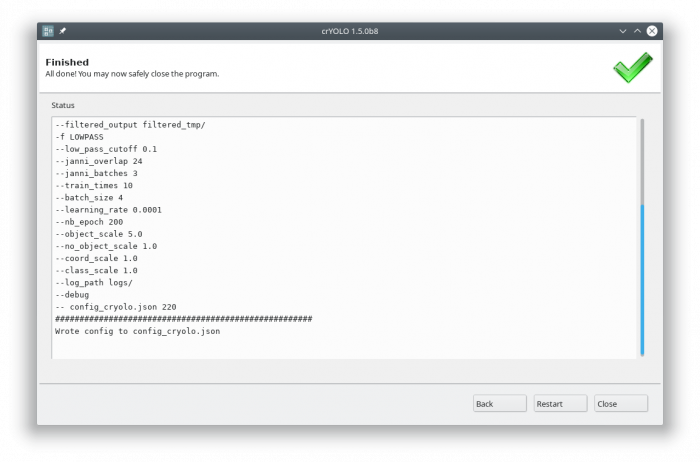

Each action has several parameters, which are organized in tabs. Once you have chosen your settings you can press [Start] (just as an example, don't press it now). The command will then be applied and crYOLO shows you the output:

It will tell you if something went wrong. Moreover, it will tell you all parameters used. Pressing [Back] brings you back to your settings, where you can either edit the settings (in case something went wrong) or go to the next action.

3. Configuration

You now have to create a configuration file for your picking project. It contains all important constants and paths and helps you to reproduce your results later on.

You can either use the command line to create the configuration file or the GUI. For most users, the GUI should be easier. Select the config action and fill in the general fields:

At this point you could already press the [Start] button to generate the config file but you might want to take these options into account:

- During training, crYOLO also needs validation data. Typically, 20% of the training data are randomly chosen as validation data. If you want to use specific images as validation data, you can move the images and the corresponding box files to separate folders. Make sure that they are removed from the original training folder! You can then specify the new validation folders in the “Validation configuration” tab.

- By default, your micrographs are low pass filtered to an absolute frequency of 0.1 and saved to disk. You can change the cutoff threshold and the directory for filtered micrographs in the “Denoising options” tab.

- When training from scratch, crYOLO is initialized with weights learned on the ImageNet training data (transfer learning). However, it might improve the training if you set the pretrained_weights option in the “Training options” tab to the current general model. Please note that this does not fine-tune the network; it only changes the initial weights the training starts from.
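If you prefer to choose the validation set yourself, the move described in the first point can be scripted. This is only a sketch under a few assumptions: box files end in .box, images are matched by the "filename contains box filename" rule, and the folder names are the ones used in the command line examples of this tutorial. The function name and the fixed random seed are hypothetical.

```python
import random
import shutil
from pathlib import Path


def split_validation(train_image, train_annot, valid_image, valid_annot,
                     fraction=0.2, seed=0):
    """Move a random fraction of image / box file pairs into validation folders.

    Returns the sorted names of the moved box files.
    """
    train_image, train_annot = Path(train_image), Path(train_annot)
    valid_image, valid_annot = Path(valid_image), Path(valid_annot)
    valid_image.mkdir(parents=True, exist_ok=True)
    valid_annot.mkdir(parents=True, exist_ok=True)

    box_files = sorted(train_annot.glob("*.box"))
    n_valid = max(1, round(fraction * len(box_files))) if box_files else 0
    chosen = random.Random(seed).sample(box_files, n_valid)

    moved = []
    for box_file in chosen:
        images = [path for path in train_image.iterdir()
                  if path.is_file() and box_file.stem in path.name]
        # Moving (not copying) guarantees the pair leaves the training folders
        for image in images:
            shutil.move(str(image), str(valid_image / image.name))
        shutil.move(str(box_file), str(valid_annot / box_file.name))
        moved.append(box_file.name)
    return sorted(moved)


# Example call:
# split_validation("train_image", "train_annot", "valid_img", "valid_annot")
```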

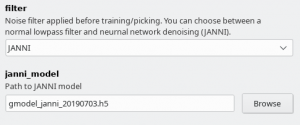

Since crYOLO 1.4 you can also use neural network denoising with JANNI. The easiest way is to use JANNI's general model (available for download), but you can also train JANNI for your data. crYOLO uses an interface to JANNI directly to filter your data; you just have to change the filter argument in the Denoising tab from LOWPASS to JANNI and specify the path to your JANNI model:

I recommend using denoising with JANNI only together with a GPU, as it is rather slow (about 1-2 seconds per micrograph on the GPU and 10 seconds per micrograph on the CPU).

You can also modify all options and parameters directly in the config.json file. It can be opened by any text editor. Please note the wiki entry about the crYOLO configuration file if you want to know more details.
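Before editing the file by hand, it can help to see where a given option lives. The following sketch does not assume any particular layout of the configuration file; it simply searches the nested JSON for a key. The helper names are hypothetical, and saved_weights_name is used only because it is mentioned in this tutorial.

```python
import json


def find_key(node, key, path=()):
    """Yield (path, value) for every occurrence of key in nested JSON data."""
    if isinstance(node, dict):
        for name, value in node.items():
            if name == key:
                yield path + (name,), value
            yield from find_key(value, key, path + (name,))
    elif isinstance(node, list):
        for index, value in enumerate(node):
            yield from find_key(value, key, path + (index,))


def load_config(filename):
    """Read a JSON configuration file into Python dictionaries and lists."""
    with open(filename) as handle:
        return json.load(handle)


# Example call:
# for path, value in find_key(load_config("config_cryolo.json"), "saved_weights_name"):
#     print(" -> ".join(map(str, path)), "=", value)
```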

On the command line, creating a basic configuration file that works for most projects is very simple. I assume your box files for training are in the folder train_annot and the corresponding images in train_image. I furthermore assume that the box size in your box files is 160. To create the configuration file config_cryolo.json simply run:

cryolo_gui.py config config_cryolo.json 160 --train_image_folder train_image --train_annot_folder train_annot

To get a full description of all available options type:

cryolo_gui.py config -h

If you want to specify separate validation folders you can use the --valid_image_folder and --valid_annot_folder options:

cryolo_gui.py config config_cryolo.json 160 --train_image_folder train_image --train_annot_folder train_annot --valid_image_folder valid_img --valid_annot_folder valid_annot
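If you create configurations for many datasets, you may want to assemble this command in a script. The sketch below only builds the argument list from the options shown above and prints it; the function name is hypothetical, and nothing is executed unless you pass the list to subprocess.run yourself.

```python
import shlex


def build_config_command(config_name, box_size, train_image, train_annot,
                         valid_image=None, valid_annot=None):
    """Assemble the argument list for `cryolo_gui.py config`."""
    cmd = ["cryolo_gui.py", "config", config_name, str(box_size),
           "--train_image_folder", train_image,
           "--train_annot_folder", train_annot]
    # Validation folders are optional and only added when both are given
    if valid_image and valid_annot:
        cmd += ["--valid_image_folder", valid_image,
                "--valid_annot_folder", valid_annot]
    return cmd


cmd = build_config_command("config_cryolo.json", 160, "train_image", "train_annot")
print(shlex.join(cmd))
# To actually run it: subprocess.run(cmd, check=True)
```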

4. Training

Now you are ready to train the model. In case you have multiple GPUs, you should first select a free GPU. The following command will show the status of all GPUs:

nvidia-smi

For this tutorial, we assume that you have either a single GPU or want to use GPU 0.

In the “Optional arguments” tab you can change the GPU that should be used by crYOLO. If you have multiple GPUs (e.g. nvidia-smi lists GPU 0 and GPU 1) you can also use both by setting the GPU argument to '0 1'.
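On a shared machine you can also let a script look for a free GPU. This sketch relies on the query interface of nvidia-smi (--query-gpu with CSV output) and treats the GPU with the least memory in use as "free", which is my simplification. The function names are hypothetical.

```python
import subprocess

QUERY = ["nvidia-smi", "--query-gpu=index,memory.used",
         "--format=csv,noheader,nounits"]


def parse_gpu_memory(csv_text):
    """Parse 'index, memory.used' lines into a {gpu_index: used_MiB} dict."""
    usage = {}
    for line in csv_text.strip().splitlines():
        index, used = (field.strip() for field in line.split(","))
        usage[int(index)] = int(used)
    return usage


def least_used_gpu(csv_text=None):
    """Return the index of the GPU with the least memory in use."""
    if csv_text is None:
        # Requires an NVIDIA driver installation that provides nvidia-smi
        csv_text = subprocess.run(QUERY, capture_output=True, text=True,
                                  check=True).stdout
    usage = parse_gpu_memory(csv_text)
    return min(usage, key=usage.get)


# Example call on a machine with NVIDIA GPUs:
# print(least_used_gpu())
```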

In the GUI you have to fill in the mandatory fields:

The default number of warmup epochs is fine as long as you don't want to refine an existing model. During the warmup epochs crYOLO does not try to estimate the size of your particle, which helps it to converge.

Once started, the training stops when the “loss” metric on the validation data has not improved 10 times in a row. This is typically enough. If you want to give the training more time to find the best model, you can increase this “not changed in a row” parameter by setting the early argument in the “Optional arguments” tab to a higher value, for example 15.
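The stopping rule can be illustrated with a few lines of Python. This is not crYOLO's actual training code, only a sketch of the "not improved N times in a row" logic applied to a made-up list of validation losses.

```python
def train_with_early_stopping(validation_losses, patience=10):
    """Return (stop_epoch, best_epoch) for per-epoch validation losses.

    Training stops once the loss has failed to improve `patience` times in a row.
    """
    best_loss = float("inf")
    best_epoch = None
    not_improved = 0
    for epoch, loss in enumerate(validation_losses, start=1):
        if loss < best_loss:
            best_loss, best_epoch, not_improved = loss, epoch, 0
        else:
            not_improved += 1
            if not_improved >= patience:
                return epoch, best_epoch
    return len(validation_losses), best_epoch


# Made-up losses: improvement for three epochs, then a plateau
losses = [1.0, 0.8, 0.7] + [0.75] * 20
print(train_with_early_stopping(losses, patience=10))
print(train_with_early_stopping(losses, patience=15))
```

With the larger value the training simply runs five epochs longer before giving up, which gives a late improvement a chance to appear.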

The final model will be written to disk as specified in saved_weights_name in your configuration file.

On the command line, navigate to the folder that contains the config_cryolo.json file, the train_image folder, etc.

Train your network with 5 warmup epochs on GPU 0:

cryolo_train.py -c config_cryolo.json -w 5 -g 0

The final model file will be written to disk.

5. Picking

Select the action “predict” and fill all arguments in the “Required arguments” tab:

In crYOLO, all particles have an assigned confidence value. By default, all particles with a confidence value below 0.3 are discarded. If you want to pick less or more conservatively you might want to change this confidence threshold to a less (e.g. 0.2) or more (e.g. 0.4) conservative value in the “Optional arguments” tab.

However, it is much easier to select the best threshold after picking using the CBOX files written by crYOLO as described in the next section.

Monitor mode

When this option is activated, crYOLO will monitor your input folder. This is especially useful for automation purposes. You can stop the monitor mode by writing an empty file with the name “stop.cryolo” in the input directory. Just add --monitor on the command line or check the monitor box in the “Optional arguments” tab.
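In an automated pipeline the stop file can be written by a script once data collection has finished. A minimal sketch, with a hypothetical function name:

```python
from pathlib import Path


def stop_monitoring(input_dir):
    """Create the empty stop.cryolo file that ends monitor mode."""
    stop_file = Path(input_dir) / "stop.cryolo"
    stop_file.touch()
    return stop_file


# Example call with the input folder used in this tutorial:
# stop_monitoring("full_data")
```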

After picking is done, you can find four folders in your specified output folder:

- CBOX: Contains a coordinate file in .cbox format for each input micrograph. It contains all detected particles, even those with a confidence lower than the selected confidence threshold. Additionally, it contains the confidence and the estimated diameter for each particle. Importing those files into the boxmanager allows advanced filtering, e.g. according to size or confidence.

- EMAN: Contains a coordinate file in .box format for each input micrograph. Only particles with a confidence higher than the selected threshold (default: 0.3) are contained in those files.

- STAR: Contains a coordinate file in .star format for each input micrograph. Only particles with a confidence higher than the selected threshold (default: 0.3) are contained in those files.

- DISTR: Contains the plots of the confidence and size distributions. Moreover, it contains a machine-readable text file with the summary statistics of these distributions, and their raw data in separate text files.
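A quick sanity check after picking is to count the particles per micrograph. The sketch below reads the .box files from the EMAN folder and assumes the common EMAN convention of one box per non-empty line (x, y, width, height). The function name and the example path are hypothetical.

```python
from pathlib import Path


def count_particles(box_dir):
    """Return {box file name: number of boxes} for all .box files in a folder."""
    counts = {}
    for box_file in sorted(Path(box_dir).glob("*.box")):
        with open(box_file) as handle:
            # One particle per non-empty line
            counts[box_file.name] = sum(1 for line in handle if line.strip())
    return counts


# Example call, assuming the output folder is called boxfiles:
# for name, n in count_particles("boxfiles/EMAN").items():
#     print(name, n)
```

Micrographs with zero or unusually few particles are good candidates for a closer look in the boxmanager.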

On the command line, to pick all your images in the directory full_data with the model weight file cryolo_model.h5 (or, for example, gmodel_phosnet_X_Y.h5 when using the general model) and a confidence threshold of 0.3, run:

cryolo_predict.py -c config.json -w cryolo_model.h5 -i full_data/ -g 0 -o boxfiles/ -t 0.3

You will find the picked particles in the directory boxfiles.

6. Visualize the results

To visualize your results you can use the boxmanager:

As image_dir you select the full_data directory. As box_dir you select the CBOX folder (or EMAN_HELIX_SEGMENTED in case of filaments).

Besides the particle coordinates, CBOX files contain additional information such as the confidence and the estimated size of each particle. Importing .cbox files into the box manager enables more filtering options in the GUI. You can plot size and confidence distributions. Moreover, you can change the confidence threshold and the minimum and maximum size and see the results in a live preview. When you are done with the filtering, you can write the new box selection into new box files. The video below shows an example.

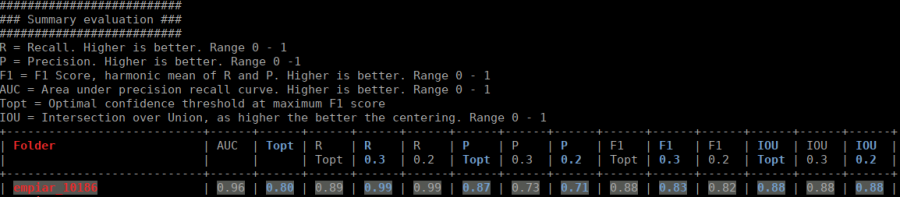

7. Evaluate your results

The evaluation tool allows you, based on your validation micrographs, to get statistics about the success of your training.

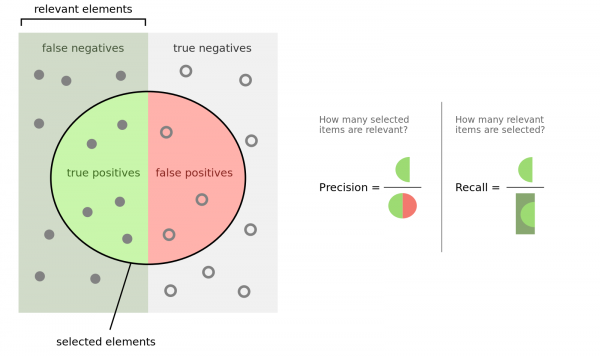

To understand the outcome, you have to know what precision and recall are. Here is a good figure from Wikipedia:

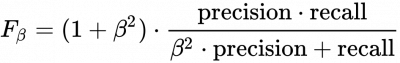

Other important measures are the F1 (β=1) and F2 (β=2) scores, which combine precision and recall into a single number.
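As a reminder of how these scores are computed: the F-beta score is (1 + β²) · precision · recall / (β² · precision + recall). The sketch below evaluates it for made-up counts of correct picks, wrong picks and missed particles; the function names and numbers are only for illustration.

```python
def precision_recall(true_positives, false_positives, false_negatives):
    """Precision and recall from counts of correct, wrong and missed picks."""
    predicted = true_positives + false_positives
    actual = true_positives + false_negatives
    precision = true_positives / predicted if predicted else 0.0
    recall = true_positives / actual if actual else 0.0
    return precision, recall


def f_beta(precision, recall, beta=1.0):
    """F-beta score; beta > 1 weighs recall more strongly than precision."""
    denominator = beta**2 * precision + recall
    if denominator == 0:
        return 0.0
    return (1 + beta**2) * precision * recall / denominator


# Hypothetical counts: 80 correct picks, 20 wrong picks, 40 missed particles
p, r = precision_recall(80, 20, 40)
print(f"precision={p:.2f} recall={r:.2f} "
      f"F1={f_beta(p, r, 1):.2f} F2={f_beta(p, r, 2):.2f}")
```

In this example the recall is lower than the precision, so the F2 score, which emphasizes recall, comes out lower than the F1 score.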

If your validation micrographs are not labeled to completion the precision value will be misleading. crYOLO will start picking the remaining 'unlabeled' particles, but for statistics they are counted as false-positive (as the software takes your labeled data as ground truth).

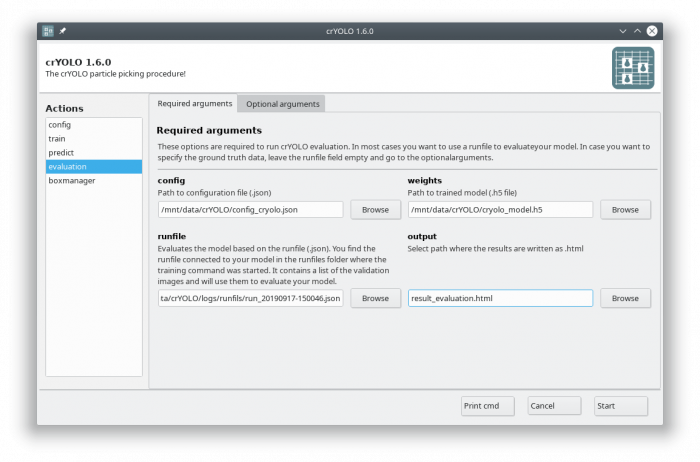

If you followed the tutorial, the validation data were selected randomly. A run file for each training is created and saved into the folder runfiles/ in your project directory. These run files are .json files containing information about which micrographs were selected for validation. To calculate evaluation metrics, select the evaluation action.
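After several trainings the runfiles folder contains more than one file. The following sketch picks the most recently modified one. It assumes that the run files are named run_*.json and that the modification time reflects the order of your trainings; the function name is hypothetical.

```python
from pathlib import Path


def latest_runfile(runfiles_dir="runfiles"):
    """Return the most recently modified run_*.json file, or None if there is none."""
    candidates = list(Path(runfiles_dir).glob("run_*.json"))
    if not candidates:
        return None
    return max(candidates, key=lambda path: path.stat().st_mtime)


# Example call from your project directory:
# print(latest_runfile())
```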

Fill out the fields in the “Required arguments” tab:

On the command line, you can run the evaluation with:

cryolo_evaluation.py -c config.json -w model.h5 -r runfiles/run_YearMonthDay-HourMinuteSecond.json -g 0

The html file you specified as output looks like this:

The table contains several statistics:

- AUC: Area under curve of the precision-recall curve. Overall summary statistics. Perfect classifier = 1, Worst classifier = 0

- Topt: Optimal confidence threshold with respect to the F1 score. It might not be ideal for your picking, as the F1 score weighs recall and precision equally. In single particle analysis, recall is often more important than the precision.

- R (Topt): Recall using the optimal confidence threshold.

- R (0.3): Recall using a confidence threshold of 0.3.

- R (0.2): Recall using a confidence threshold of 0.2.

- P (Topt): Precision using the optimal confidence threshold.

- P (0.3): Precision using a confidence threshold of 0.3.

- P (0.2): Precision using a confidence threshold of 0.2.

- F1 (Topt): Harmonic mean of precision and recall using the optimal confidence threshold.

- F1 (0.3): Harmonic mean of precision and recall using a confidence threshold of 0.3.

- F1 (0.2): Harmonic mean of precision and recall using a confidence threshold of 0.2.

- IOU (Topt): Intersection over union of the auto-picked particles and the corresponding ground-truth boxes. The higher, the better – evaluated with the optimal confidence threshold.

- IOU (0.3): Intersection over union of the auto-picked particles and the corresponding ground-truth boxes. The higher, the better – evaluated with a confidence threshold of 0.3.

- IOU (0.2): Intersection over union of the auto-picked particles and the corresponding ground-truth boxes. The higher, the better – evaluated with a confidence threshold of 0.2.
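For readers unfamiliar with the intersection over union, here is how it is computed for a single pair of boxes. The sketch assumes boxes given as (x, y, width, height) with x and y denoting one corner, as in .box files; how crYOLO matches picked boxes to ground-truth boxes and averages the values is not shown here.

```python
def iou(box_a, box_b):
    """Intersection over union of two boxes given as (x, y, width, height)."""
    ax, ay, aw, ah = box_a
    bx, by, bw, bh = box_b
    # Overlap along each axis; zero if the boxes do not touch
    inter_w = max(0.0, min(ax + aw, bx + bw) - max(ax, bx))
    inter_h = max(0.0, min(ay + ah, by + bh) - max(ay, by))
    intersection = inter_w * inter_h
    union = aw * ah + bw * bh - intersection
    return intersection / union if union > 0 else 0.0


# A picked box shifted by half a box width relative to the ground truth
print(iou((0, 0, 160, 160), (80, 0, 160, 160)))
```

A shift of half a box width already reduces the IOU to one third, so values well below 1 are normal even for visually good picks.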

If the training data consist of multiple folders, the evaluation is done for each folder separately. Furthermore, crYOLO estimates the optimal picking threshold with respect to the F1 score and the F2 score. Both are essentially weighted (harmonic) averages of recall and precision; the F2 score puts more weight on recall, which is often more important in cryo-EM.
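To see why the two scores can suggest different thresholds, consider the following sketch. It sweeps over all confidence values of a made-up set of picks and returns the threshold with the highest F-beta score. This illustrates the idea only and is not crYOLO's implementation; the function names and the data are hypothetical.

```python
def f_beta(precision, recall, beta):
    """F-beta score; beta > 1 weighs recall more strongly than precision."""
    denominator = beta**2 * precision + recall
    return (1 + beta**2) * precision * recall / denominator if denominator else 0.0


def best_threshold(picks, n_ground_truth, beta=1.0):
    """Return (threshold, score) with the highest F-beta score.

    picks: list of (confidence, is_correct) tuples for all auto-picked boxes.
    n_ground_truth: number of labeled particles (must be greater than zero).
    """
    best = (None, -1.0)
    for threshold in sorted({confidence for confidence, _ in picks}):
        kept = [correct for confidence, correct in picks if confidence >= threshold]
        true_positives = sum(kept)
        precision = true_positives / len(kept)
        recall = true_positives / n_ground_truth
        score = f_beta(precision, recall, beta)
        if score > best[1]:
            best = (threshold, score)
    return best


# Made-up picks for a micrograph with 4 labeled particles
picks = [(0.9, True), (0.8, True), (0.7, True),
         (0.5, False), (0.4, False), (0.3, True)]
print("F1:", best_threshold(picks, 4, beta=1))
print("F2:", best_threshold(picks, 4, beta=2))
```

For this data the F1 score favors a threshold of 0.7, whereas the F2 score favors 0.3, because accepting two false positives is outweighed by recovering the fourth particle.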